March 2026

Summary

This report summarises the findings of three randomised controlled trials testing the effectiveness of using analytics data to proactively identify students with poor wellbeing and guide them towards support services. The trials, at three higher education providers, took place over the 2024-25 academic year and covered different nudge types (email or app-based) and different ways of identifying students at risk of poor wellbeing (classroom attendance, a complex analytics model or a wellbeing survey).

Aims

Concern regarding student mental health and wellbeing has grown significantly in recent years, particularly following the COVID-19 pandemic. Poor wellbeing in students has been associated with lower academic engagement, attainment and continuation. Hence, analytics systems may be well placed to identify students with poor wellbeing and to facilitate proactive signposting and support before students’ needs become more serious, thus avoiding greater distress and requiring less input from higher education providers’ (HEPs’) mental health and wellbeing teams.

Approach

Following an open call in 2024, TASO commissioned a team from the Policy Institute, King’s College London to carry out evaluations at three higher education providers – University of East Anglia (UEA), Northumbria University, and University of Staffordshire – which were using or planning to use analytics systems to proactively identify students with poor wellbeing and guide them to support services.

Key findings

The use of learning analytics systems to proactively identify students with poor attendance and direct them to wellbeing support services via nudge interventions had no measurable impact on students’ subsequent academic engagement or other outcomes relating to uptake of wellbeing support services.

Low survey response rates prevented the accurate measurement of wellbeing in the target population.

The trial at Northumbria University found little overlap in the population of students identified as having low wellbeing by an analytics system and those identified as having poor wellbeing by a wellbeing survey at enrolment.

Recommendations

Providers should carefully interrogate the use-case for analytics systems and how they can best address wellbeing and mental health issues in their context.

We encourage providers to test whether populations of ‘at-risk’ students identified by their analytics data overlap as expected with groups identified via other means, for example wellbeing surveys.

The nature of thresholds for triggering light-touch support can mean that some students are not supported quickly enough. Providers could consider evaluating the effectiveness of measuring student wellbeing at enrolment (when survey response rates are likely to be highest) and immediately guiding students with poor wellbeing to wellbeing support services.

Funding

This project was funded by a grant from the Evaluation Task Force (Cabinet Office/HM Treasury) and its Evaluation Accelerator Fund (2023-2025).

Acknowledgements

Impact Evaluation: The Policy Institute, King’s College London: Beti Baraki, Susannah Hume, Megan Liskey, Parnika Purwar, Michael Sanders

University of East Anglia: Fabio Arico, Kristina Garner, Helena Gillespie, Abass Isiaka, Matthew Aldrich

Northumbria University, Newcastle: Carly Foster, James Newham

University of Staffordshire: Vanessa Dodd, Kirstie Brookes

TASO: Eliza Kozman, Rob Summers, Mikayla Boginsky

Project background

Concern regarding student mental health and wellbeing has grown significantly in recent years, particularly following the COVID-19 pandemic. Approximately one in five students have a diagnosed mental health condition, and mental health has recently been identified as the most commonly cited reason for students leaving higher education (Sanders and Ellingwood, 2025).

Evidence suggests that students in higher education face heightened and multifaceted pressures involving interconnected elements (Jones and Bell, 2024). This has been attributed to several factors such as academic workload, isolation and loneliness, financial difficulties, and the challenges of adapting to a new environment (Worsley et al., 2020). In addition, a concerning trend is the disproportionate impact of mental health issues on specific student groups. For example, students from ethnic minority groups, care-experienced students, as well as those from low socio-economic backgrounds are more vulnerable to mental health challenges (Robertson et al., 2022).

Despite the magnitude of these issues, the proportion of students who access help for mental health difficulties is lower than the prevalence expected from the self-report data (McLafferty et al., 2024; Newham and Foster, 2025). At the same time, higher education counselling and mental health teams are already struggling to meet rising demand despite increases in funding (Morrish, 2023; Pollard et al., 2021). Consequently, higher education institutions are in the precarious situation of needing both to empower struggling (and potentially the most at-risk) students to access support and to manage the caseload of students who already do access support.

There have been calls to embed wellbeing into the university system (Lewis, 2025; Spaeth, 2024; Newton, Dooris and Wills, 2016) and criticisms of approaches such as mental health awareness campaigns for failing to promote supportive communities (Finan and Foulkes, 2025). The sector is looking for better ways to address student wellbeing and mental ill health. The University Mental Health Charter (Student Minds, 2025) has encouraged providers to look at student wellbeing through a whole university approach, which prioritises the nurturing of caring communities in all aspects of student life. However, change can be slow in the face of the growing need for support and there is a lack of evidence on effective interventions and approaches.

TASO’s 2023 Student Mental Health Evidence Toolkit found an absence of robust evidence for the majority of intervention types in the UK, including those embedded in the curriculum, whole-provider approaches, peer support and psychoeducation. There is also almost no evidence on the impact of mental health and wellbeing interventions on other student outcomes such as attendance, continuation and attainment.

In response to this evidence gap, in 2024 TASO commissioned three evaluation projects covering a range of methodologies: randomised controlled trials (RCTs), quasi-experimental designs and theory-based evaluations. This pluralist approach gave us the best chance of identifying different types of initiatives aimed at supporting student wellbeing, so that we could address the gaps in the evidence and identify ways in which the sector can focus their resources more effectively.

The interventions which were evaluated address student wellbeing and encompass social and physical aspects alongside academic engagement. They do not treat mental health clinically as doing so would require trained professionals who are more likely to work in a healthcare setting; higher education providers are much more likely to offer wellbeing support. TASO has adopted definitions from the University Mental Health Charter (Student Minds, 2025) which understands ‘wellbeing’ as a state which allows an ‘individual to fully exercise their cognitive, emotional, physical and social powers’. Importantly, low wellbeing can exist without clinical mental illness (UUK, 2021). These definitions allow the sector to use a social model of care (Hogan, 2019) alongside clinical models.

Learning analytics and student wellbeing

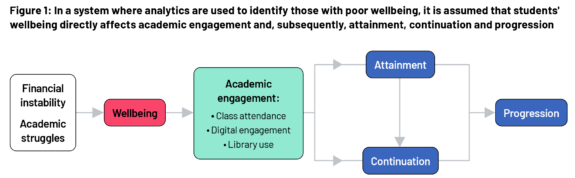

The use of analytics systems to proactively identify students with poor wellbeing rests on the assumption that wellbeing is directly related to academic engagement, attainment and continuation (Figure 1). On this assumption, poor wellbeing is likely to result in poor academic engagement, which in turn implies that students with poor wellbeing who are directed to wellbeing support will re-engage with their studies.

It is this premise which underpins the rollout of light-touch ‘nudge’ interventions (typically emails and texts) which contain links to wellbeing support services for students with low engagement. However, more evidence is needed on this approach, particularly given its widespread and growing adoption in higher education.

We report on a suite of mixed-methods evaluations designed to understand the impact of light-touch nudge interventions for students with low engagement.

Evaluations

Three evaluations, in the form of randomised controlled trials, with accompanying implementation and process evaluations, were conducted at the University of East Anglia (UEA), Northumbria University and the University of Staffordshire. An overview of each trial is available in the appendices of this report, which include links to the associated trial protocols and technical reports.

The three trials covered:

- evaluation of different methods of using analytics to identify at-risk students – attendance at timetabled classes alone versus a multi-dimensional approach that combines data from the student records system with digital engagement and attendance monitoring

- a comparison of using the results of a wellbeing survey versus using analytics to identify poor wellbeing

- different methods of delivering a nudge (email or smartphone notification)

- whether the nudge contained a general signposting to wellbeing support services or specific wellbeing support.

The outcomes differed slightly at each institution (see Box 1) but covered attendance at timetabled classes, logins to the virtual learning environment (VLE), referral to support services and risk of non-continuation. Due to the likelihood of low response rates, wellbeing — which can only be collected via surveys — was treated as an exploratory outcome.

| Box 1 | UEA | Northumbria University | University of Staffordshire |
|---|---|---|---|
| Primary outcome | Attendance at timetabled classes | Student self-referral to support services | Attendance at timetabled classes; VLE logins |
| Secondary outcome | VLE logins; engagement with nudge email | Learning analytics continuation prediction score; student withdrawal; engagement with nudge email | Engagement with support services; progress to tiered support |
| Exploratory | Wellbeing | | Wellbeing |

Key findings

- The use of learning analytics systems to proactively identify students with poor attendance and direct them to wellbeing support services via nudge interventions had no measurable impact on students’ subsequent academic engagement across any of the trials.

- There was also no evidence of a causal link between these nudges and other outcomes relating to uptake of wellbeing support services.

- The trial at Northumbria University found little overlap in the population of students identified as having low wellbeing by an analytics system and those identified as having poor wellbeing by a wellbeing survey at enrolment.

- Low survey response rates prevented robust measurement of wellbeing in the target population.

- The assumed link between light-touch communication based on analytics data, wellbeing and academic engagement is not supported by the evidence from these trials. In interviews and focus groups, students and staff questioned how much engagement and wellbeing were related to each other, and whether the data in learning analytics systems captured sufficient nuance of student engagement.

- At the University of Staffordshire, some students reported finding the display of data in the institutional app (Beacon) confusing, which may have reduced their likelihood of acting on the information in the nudge.

Recommendations for practice

- As implemented in these evaluations, light-touch nudge interventions (emails and app notifications) did not improve the engagement of students identified as being high-risk (using analytics data or otherwise). Therefore, providers should carefully interrogate the use-case for analytics systems and how they can best address wellbeing and mental health issues in their context.

- However, there is widespread literature on the power of low-cost, light-touch nudges to improve outcomes across a range of domains, including education and health (see for example Damgaard and Nielsen, 2018). Providers should not dismiss the potential of such interventions to improve attendance and other outcomes, even if they have not been found effective in this context.

- Although the cost of individual nudges is small, there is a wider question about the potentially large cost of investing in analytics systems. Partners in our projects and advisory groups emphasised the value of such systems for centralising and formalising data collection arrangements in their institution and their use as monitoring systems. Therefore, we encourage institutional analysts to perform their own cost-benefit exercises which can provide clarity around the scope and use of systems which are needed in their local context.

- It is not clear from our findings that analytics data can effectively target students who are at risk of poor wellbeing. We encourage providers to test whether populations of at-risk students identified by their analytics data overlap as expected with groups identified via other means, for example wellbeing surveys. If and where this is not the case, providers should think about whether this challenges the assumptions behind the support they currently target at such groups.

- The nature of thresholds for triggering light-touch support can mean that some students are not supported quickly enough. Providers could consider evaluating the effectiveness of measuring student wellbeing at enrolment (when survey response rates are likely to be highest) and immediately guiding students with poor wellbeing to wellbeing support services.

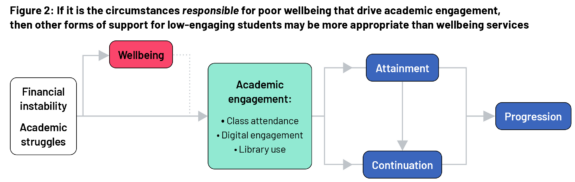

- Institutions need to understand which aspects of students’ poor wellbeing are driven by the institutional setting or other factors so the appropriate support can be provided. For example, if poor wellbeing is due to academic struggles, then skills-based support is likely to be more appropriate than wellbeing support in order to encourage re-engagement. This in turn leads to a different conceptualisation of the relationship between academic engagement and wellbeing (see Figure 2), where it is the circumstances responsible for poor wellbeing that drive poor academic engagement. Clarity around the position of wellbeing in the chain between student circumstances and outcomes is crucial to the design of appropriate support interventions.

- Careful consideration should be given to, and testing carried out on, the clarity and content of the data presented, using the best available evidence as a guide.

- Electronic communications with students should be optimised for devices with smaller screens to increase engagement with them.


Recommendations for evaluation

- Institutions should continue to optimise their analytics-prompted interventions through randomised testing of variations of their approach (A/B testing). This testing could be used to understand the engagement threshold for identifying at-risk students, the timing of any nudge or intervention, how it is delivered and the information it contains.

- Evaluation protocols should include a data management plan that indicates not only where outcome data will be securely stored, but exactly what data is required to be stored, and at what points during the evaluation cycle it should be obtained. For example, data from learning analytics systems can be changed retrospectively and the original data may not then be available.

- Evaluators should consider how best to measure wellbeing in their setting. If response rates to post-enrolment wellbeing surveys cannot be improved sufficiently to obtain an unbiased estimate, then measures such as academic engagement, attainment and continuation may have to be used. However, given the findings of these evaluations, it will be important to establish a link between wellbeing and those measures in a given setting in order to have any confidence in their utility.
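The A/B testing recommended above depends on allocating students to variants at random. The following is a minimal sketch of simple randomisation, not the procedure used in these trials (which is set out in the trial protocols). The function name `allocate`, the arm labels, the seed and the student ID format are all illustrative assumptions.

```python
import random
from collections import Counter


def allocate(student_ids, arms=("control", "treatment"), seed=2024):
    """Randomly allocate students to arms of (near-)equal size.

    Hypothetical helper: IDs are sorted first so the result does not depend
    on input order, then shuffled with a fixed seed so the allocation can be
    reproduced and audited later.
    """
    rng = random.Random(seed)
    ids = sorted(student_ids)
    rng.shuffle(ids)
    # Deal the shuffled IDs round-robin into the arms.
    return {sid: arms[i % len(arms)] for i, sid in enumerate(ids)}


# Illustrative IDs only; a real trial would draw these from the records system.
students = [f"S{n:04d}" for n in range(10)]
allocation = allocate(students)
arm_sizes = Counter(allocation.values())
```

Fixing the seed and storing the resulting allocation alongside the outcome data also supports the data management recommendation above, since the allocation can be regenerated and checked. Providers wanting balance on characteristics such as course or year of study might instead stratify before shuffling.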

Next steps

- TASO will conduct a literature search and evidence synthesis to update the TASO Learning Analytics evidence toolkit page, to help the sector determine if and how to implement analytics-based nudges to improve student outcomes.

- TASO will be conducting a workshop at the upcoming SMaRteN conference on developing and refining testable causal pathways to improve student wellbeing to help the sector effectively plan evaluation of activities to support student wellbeing.


Case study 1: University of East Anglia (UEA)

This design has been pre-registered on the Open Science Framework (OSF).

For more details, please see the technical report.

Implementation and process evaluation: Fabio Arico, Kristina Garner, Helena Gillespie, Abass Isiaka, Matthew Aldrich.

Impact evaluation: Beti Baraki, Susannah Hume, Megan Liskey, Parnika Purwar, Michael Sanders.

Intervention

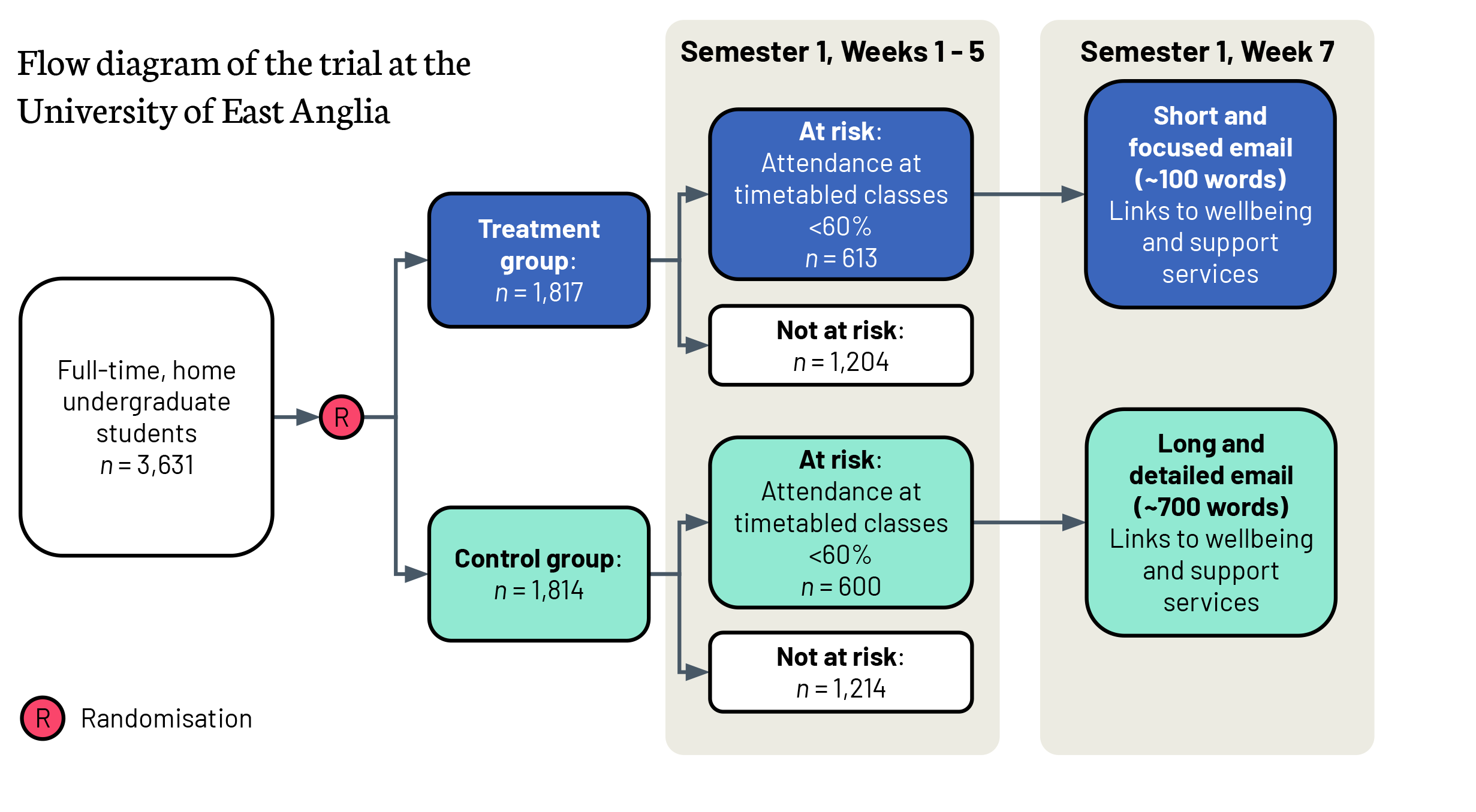

The two-armed randomised controlled trial at UEA involved 3,631 full-time undergraduate students from five schools of study who were randomly allocated to the treatment group or control group. Students in both arms of the trial who had less than 60% attendance at timetabled sessions by week five of the first semester received an email nudging them to wellbeing support services. Those in the control group received a long, approximately 700-word email (business as usual), while those in the treatment group received a much shorter 100-word email. Both emails nudged students to wellbeing support services and included a trackable link for students to indicate that they had read the email. UEA were concerned that the longer, business-as-usual email was not being read and wanted to see if a shorter email was more likely to engage students with support services.

The primary outcome was taught-session attendance post-intervention. Secondary outcomes were the proportion of days on which a student accessed the virtual learning environment (VLE) and whether students clicked on the trackable links to support services, indicating that they had received the email. Wellbeing was an exploratory outcome.

Note: The Office for National Statistics uses four survey questions to measure personal wellbeing, see https://www.ons.gov.uk/peoplepopulationandcommunity/wellbeing/methodologies/surveysusingthe4officefornationalstatisticspersonalwellbeingquestions

Impact evaluation

Primary outcome: Attendance at timetabled classes

There was no additional impact of sending a shorter email than a longer email on subsequent attendance.

Secondary outcome: Logins to the virtual learning environment (VLE)

There was no additional impact of sending a shorter email than a longer email on the proportion of days that students subsequently logged on to the VLE.

Secondary outcome: Engagement with email

Students who received the shorter email were more likely to click on the embedded link to acknowledge that they had read it (28%, n=174) than those who received the longer email (8%, n=45), although in both groups only a minority of students indicated that they had read it. If all students had been sent the shorter email, we would have expected an additional 125 students in the control group to have acknowledged receipt of the email.

Exploratory outcome: Wellbeing

Due to the very low response rate to the wellbeing survey, it is not possible to determine whether or not the intervention had any impact on students’ wellbeing.

Implementation and process evaluation

Staff at UEA used institutional data and interviews with staff and students to understand the intervention in terms of reach, fidelity and exposure.

| Data collection tool | Achieved sample size |
|---|---|
| Student interviews | 2 students |
| Staff interviews | 1 Senior Engagement Team member; 2 Senior Advisers |
| Link to form to indicate students had read the email | 190 students |
| UEA wellbeing survey | 8 students |

Reach

Regardless of whether students received the shorter or longer email, approximately three-quarters of students who did acknowledge receipt read the email on a mobile device. Communications with students should be optimised for mobile devices.

The threshold for receiving the nudge email in week 7 (less than 60% class attendance in weeks 1-5) meant that many students with poor attendance (but above 60%) did not receive the email, and those with poor attendance from the beginning of term were nudged 7 weeks later. The threshold and delay in nudging was discussed in the staff and student interviews. Staff recommended a flexible approach to setting thresholds that reflects the needs of students on different courses, but there was a feeling that it could be higher and earlier:

“I would love to have it [the threshold] higher, if I’m honest… But there’s a real-life consequence, which … [is that we would then] … ask advisers to do more work with those students.” [staff]

“But there are plenty of places [time periods in the term] where… we need to put extra pressure on people turning up. For me the very start of term is absolutely that point that we should do that.” [staff]

“If you don’t attend classes for the first two weeks, you start to feel like you can’t attend anymore.” [student]

The intent of the email intervention (whether short or long) is to re-engage students with their studies by offering wellbeing support. However, both staff and students noted that engagement is not just about attendance, and there are multiple reasons why a student might stop engaging with their studies that are associated with, but not directly driven by, poor wellbeing:

“I have had a ‘one-off call with a wellbeing adviser’. It didn’t help much because I later realised it was meant for immediate issues, while my struggles were long-term academic challenges.” [student]

Fidelity

There were mixed views about the short email format. While it was more accessible on mobile devices, it did not provide clear guidance about next steps. Staff found the email format lacked urgency and was not firm enough, and that an email alone may not be sufficient to trigger behavioural change in disengaged students.

Exposure

Students discussed different reasons for not engaging with the wellbeing support. The importance of a personal connection with those providing support was emphasised by one student:

“I don’t want to talk about my struggles with someone I have no prior relationship with.” [student]

Another student knew from prior experience that the signposted services were not appropriate for them at that point:

“… I didn’t click on the link. I was already aware of wellbeing services because I looked into them last year. I wasn’t in distress, so I didn’t feel the need to explore further.” [student]

While one student expressed support for “forcing a meeting” because “A lot of students, myself included, won’t actively seek help, even if we need it” and meeting with “an engagement or wellbeing officer, forces you to address the problem”, there were different views from the staff involved about whether that would be effective:

“So far, I’ve organised and held around 500 student meetings. Some students say they will re-engage after receiving support, but in reality, it’s about 50/50 whether they follow through. For example, I recently emailed a student who missed two of my classes despite our previous conversation about improving their attendance. They apologised, but it’s a pattern I see often.” [staff]

Case study 2: Northumbria University

This design has been pre-registered on the Open Science Framework (OSF).

For more details, please see the technical report.

Implementation and Process Evaluation: Carly Foster, James Newham.

Impact Evaluation: Beti Baraki, Susannah Hume, Megan Liskey, Parnika Purwar, Michael Sanders.

Intervention

Upon enrolment (or re-enrolment) at Northumbria University, all students are invited to fill out a wellbeing questionnaire (WHO-5). In 2024, 13,122 full-time, home undergraduate and postgraduate students consented to their analytics data and wellbeing survey data being used by the university to help identify students who needed support. The analytics system uses a combination of data from the student records system and on-course real-time data including attendance at timetabled classes, logins to the virtual learning environment and engagement with the library (reading list clicks, loans and access) to provide a continuation prediction likelihood for each student.

Note: The WHO-5 is a self-report instrument measuring mental wellbeing. It consists of five statements relating to the past two weeks. Each statement is rated on a six-point scale, with higher scores indicating better mental wellbeing.

The trial at Northumbria University was a three-arm randomised controlled trial with 4,367 students in each of treatment group 1 (TG1) and treatment group 2 (TG2), and 4,388 students in the control group. The method of identifying students at risk of poor wellbeing differed between TG1 and TG2. Students in TG1 with a WHO-5 score lower than 50 were deemed to have poor wellbeing; in TG2 the criterion for poor wellbeing was a predicted continuation likelihood lower than 75%. In both TG1 and TG2, at-risk students were randomly allocated to receive an email signposting either to guided online self-help services or to one-to-one support. Students not at risk received a generic email. Students in the control group did not receive an email.
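The two identification rules can be sketched as follows. This is an illustration rather than the university's implementation. It assumes the conventional WHO-5 scoring, in which each of the five items is rated 0 to 5 and the raw total (0 to 25) is multiplied by 4 to give a 0 to 100 score, and it assumes the continuation likelihood is held as a probability between 0 and 1. All function and constant names are hypothetical.

```python
WHO5_AT_RISK_BELOW = 50            # threshold reported for TG1
CONTINUATION_AT_RISK_BELOW = 0.75  # threshold reported for TG2 (75%)


def who5_percentage(item_scores):
    """Convert five WHO-5 item ratings (each 0-5) into a 0-100 score.

    Assumes the conventional scoring: raw total (0-25) multiplied by 4.
    """
    if len(item_scores) != 5 or any(not 0 <= s <= 5 for s in item_scores):
        raise ValueError("WHO-5 needs exactly five ratings between 0 and 5")
    return sum(item_scores) * 4


def at_risk_by_survey(item_scores):
    """TG1-style rule: WHO-5 score lower than 50."""
    return who5_percentage(item_scores) < WHO5_AT_RISK_BELOW


def at_risk_by_analytics(continuation_likelihood):
    """TG2-style rule: predicted continuation likelihood lower than 75%."""
    return continuation_likelihood < CONTINUATION_AT_RISK_BELOW


# Made-up responses: a raw total of 12 gives 48 (at risk), 13 gives 52 (not).
low_scorer = at_risk_by_survey([3, 3, 2, 2, 2])
borderline = at_risk_by_survey([3, 3, 3, 2, 2])
```

Because both rules use a strict "lower than" comparison, a student exactly on either threshold is not flagged.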

The primary outcome was whether or not students referred themselves to the signposted support services. Secondary outcomes included: whether or not students referred themselves to the specific support service they were referred to; their post-nudge analytics continuation prediction score; and whether or not a student withdrew from their studies.

Evaluation

Impact evaluation

Primary outcome: Student self-referral to support services

There was no impact of the method of identification of at-risk students on the average probability of students self-referring to support services in the university compared with those in the control group (who received no nudge).

Secondary outcome: Change in learning analytics continuation prediction score

There was no impact of a nudge generated by either at-risk identification method (WHO-5 or analytics) on students’ learning analytics continuation prediction score.

Secondary outcome: Withdrawal

There is no evidence of an impact of identification method on the likelihood of withdrawal from studies.

Secondary outcome: Engagement with nudge email

There was weak evidence that students in TG1 (at risk identified using WHO-5) were more likely to click on the links to support services (2.4%; 106/4,367) than students in TG2 (at risk identified using analytics; 1.7%; 76/4,367). The weakness of this evidence may be due to the time between completing the wellbeing survey and receiving the nudge (between four and 12 weeks), but the result is consistent with the idea that students identified as having poor wellbeing prior to the academic year are more likely to be in need of wellbeing support than those identified by analytics during the academic year.

Highlights from the implementation and process evaluation

The team at Northumbria University conducted a focus group with staff directly involved in delivery of mental health and wellbeing services to students to understand the implementation of the nudges. The team also analysed quantitative data to understand the effects of participation on the sample who took part in the study, how students engaged with the nudges, and the relationship between offering wellbeing support and a student’s propensity to seek help.

The trial used a complex opt-in consent process, which limited the reach of the intervention. Out of 24,575 enrolled students, only 54% (13,122) consented to be part of the research. Consent rates were associated with student characteristics such as gender; female students were more likely than male students to consent to be part of the research (59% vs 51%). This means that the sample for our analysis was skewed and may affect the generalisability of our findings.

Across all 13,122 students in the trial, 3,093 (24%) met the definition of at-risk due to their wellbeing survey score or analytics continuation prediction score. Of those, only 261 (8%) met the definition of at-risk using both measures.
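The overlap reported here is the kind of check providers are encouraged to run on their own data. A minimal sketch, assuming each identification method yields a collection of student IDs; the function name `overlap_summary` and the toy IDs are hypothetical.

```python
def overlap_summary(survey_ids, analytics_ids):
    """Compare two at-risk groups identified by different methods.

    Returns how many students are flagged by either method, how many by
    both, and the share of flagged students who are flagged by both.
    """
    survey, analytics = set(survey_ids), set(analytics_ids)
    either = survey | analytics
    both = survey & analytics
    share = len(both) / len(either) if either else 0.0
    return {"either": len(either), "both": len(both), "share_both": share}


# Toy example with made-up IDs: one of four flagged students is in both groups.
toy = overlap_summary({"a", "b", "c"}, {"c", "d"})

# The figures reported above, expressed the same way.
reported_share_both = 261 / 3093         # about 0.08 of at-risk students
reported_share_at_risk = 3093 / 13122    # about 0.24 of the trial sample
```

A low share, as found at Northumbria, suggests that the two methods are identifying largely different students.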

Engagement with the email nudges was low; 18% (1,544) of students who received an email opened it. However, engagement with nudges by at-risk students was much higher; 48% (544) of at-risk students who received a nudge opened the email.

In terms of implementation issues, staff noted the nudges were not timed well as they were sent around half-term and staff cover was limited.

Staff appreciated the intervention and found it potentially useful for students, but acknowledged there are ongoing capacity issues and that they would not be able to meet the needs of students without increased resources.

Case study 3: University of Staffordshire

This design has been pre-registered on the Open Science Framework (OSF).

For more details, please see the technical report.

Implementation and Process Evaluation: Vanessa Dodd, Kirstie Brookes.

Impact Evaluation: Beti Baraki, Susannah Hume, Megan Liskey, Parnika Purwar, Michael Sanders.

Intervention

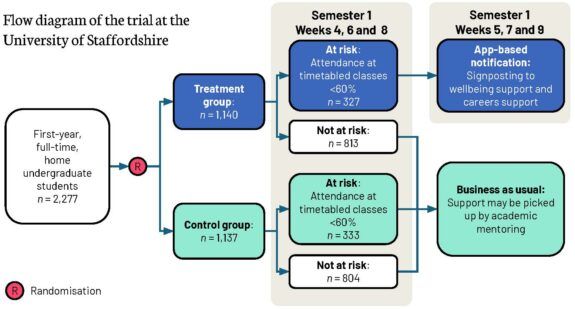

In contrast with the other two trials, the nudge at the University of Staffordshire was delivered by notifications from an institutional smartphone app, Beacon. This app is used by students to access timetables and other relevant information.

A cohort of 2,277 first-year full-time undergraduate home students were randomly allocated to either the control group (1,137) or the treatment group (1,140). At three points (weeks 4, 6 and 8) during the first semester, student attendance over the prior two weeks was assessed. Those students in the treatment group with attendance lower than 60% received a notification on the app in the following week (weeks 5, 7 and 9). The notification contained signposting to various support services, including wellbeing support and careers support. Students in the control group, or students who exceeded the attendance threshold, did not receive a notification. Additional support for students in the treatment and control groups, whether they were above or below the threshold, may have been picked up by academic mentors (also known as personal academic tutors).
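The notification schedule described above can be sketched as a simple rule. This is an illustration, not the Beacon app's logic. It assumes attendance is stored per week as sessions attended and sessions scheduled, and that "the prior two weeks" means the assessment week and the week before it; the report does not specify either. All names are hypothetical.

```python
ASSESSMENT_WEEKS = (4, 6, 8)   # weeks in which attendance was assessed
ATTENDANCE_THRESHOLD = 0.60    # notify when attendance is lower than 60%


def notification_weeks(weekly_attendance, arm):
    """Return the weeks in which a student would be sent a notification.

    weekly_attendance maps week number -> (sessions attended, sessions scheduled).
    Only students in the treatment arm are notified.
    """
    if arm != "treatment":
        return []
    weeks = []
    for week in ASSESSMENT_WEEKS:
        # Assumed two-week window: the assessment week and the one before it.
        window = [weekly_attendance.get(w, (0, 0)) for w in (week - 1, week)]
        attended = sum(a for a, _ in window)
        scheduled = sum(s for _, s in window)
        if scheduled == 0:
            continue  # nothing timetabled, so no attendance rate to assess
        if attended / scheduled < ATTENDANCE_THRESHOLD:
            weeks.append(week + 1)  # notification goes out the following week
    return weeks


# Made-up record: low attendance in weeks 3-4 and 7-8, full attendance in 5-6.
record = {3: (1, 5), 4: (2, 5), 5: (5, 5), 6: (5, 5), 7: (0, 4), 8: (2, 4)}
treated = notification_weeks(record, "treatment")
untreated = notification_weeks(record, "control")
```

Under this rule a student whose attendance dips between assessment windows is not notified until the next assessment point, which mirrors the concern raised elsewhere in this report that thresholds can delay support.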

Impact evaluation

Primary outcome: Attendance at timetabled classes

There was no significant impact on attendance at timetabled classes in the second week after the intervention.

Primary outcome: Logins to the virtual learning environment

There was no significant impact on logins to the virtual learning environment in the second week following the intervention.

Secondary outcome: Engagement with support services

There was no significant difference between students in the treatment and control groups in the likelihood of students accessing support services.

Secondary outcome: Progression to tiered support

There was, however, weak evidence that students who received a nudge were more likely to progress to tiered support where the university is more proactive in contacting students due to persistent low engagement.

Exploratory outcome: Wellbeing

Due to low response rates to the student wellbeing survey, it was not possible to investigate the effect of the intervention on wellbeing status.

Highlights from the implementation and process evaluation

Staff at the University of Staffordshire used institutional data and interviews with staff and students to understand the intervention in terms of whether or not the nudges were run as intended (adherence), who received the nudge (reach) and stakeholder perspectives.

| Data collection tool | Achieved sample size |
|---|---|
| Student interviews | 9 participants (5 from the treatment group, 4 from the control group) |
| Delivery staff interviews | 5 participants |

Adherence

The notification was implemented in line with the design of the randomised controlled trial, and no students in the control group received it. However, a data handling error meant that fewer students in the treatment sample received the second notification than were eligible for it, so the impact of receiving a nudge is likely to be underestimated.

Reach

Over a quarter (29%; n=660) of the combined treatment and control sample met the attendance threshold for a notification at least once during the intervention. Of these, 327 were in the treatment group and therefore received at least one notification. There were significant differences in the likelihood of being sent a notification between students with different ethnic backgrounds. Black students were least likely to receive a notification (25/108; 23%), whereas students with mixed heritage were most likely to receive one (24/60; 40%).
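The reported reach percentages can be checked against the counts given in this section. The short Python sketch below is only an arithmetic check; the variable names are illustrative, and the number of control students below the threshold is derived here by subtraction rather than stated in the report.

```python
def pct(numerator, denominator):
    """Percentage rounded to the nearest whole number."""
    return round(100 * numerator / denominator)


cohort = 2277              # all randomised students
below_threshold = 660      # met the threshold at least once (both groups)
treated_notified = 327     # of those, students in the treatment group

print(pct(below_threshold, cohort))        # 29
print(below_threshold - treated_notified)  # 333 (derived: control students below threshold)
print(pct(25, 108))                        # 23 (Black students)
print(pct(24, 60))                         # 40 (students with mixed heritage)
```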

Stakeholder perspectives

Students responded positively to the content and tone of the nudges, describing them as motivating and supportive and saying that they raised awareness of existing support services:

“I quite liked it… because it made me like sort of keep up with my attendance. Made me want to attend lessons more.”

However, staff did flag some resistance:

“There was anger [from one student]. There was anger there. Why are you, you know, watching me? Yeah, but all the others tend to be they were quite responsive and looking for support.”

Some students found the display of engagement data in the Beacon app confusing and unclear; this may have affected their willingness or ability to act on the nudge. Some students also suggested they would have preferred the nudge notifications to be supplemented with an additional mode of communication such as email, which they saw as more personalised and more likely to be read than an app notification.

Staff and some students raised concerns about relying solely on attendance data for information on student wellbeing or academic performance because disengagement happens for many reasons not limited to wellbeing.

References

Damgaard, M. T., and Nielsen, H. S. (2018) Nudging in education. Economics of Education Review, 64, 313-342. https://doi.org/10.1016/j.econedurev.2018.03.008

Finan, S. and Foulkes, L. (2025) Disingenuous “box‐ticking”: Undergraduate students’ attitudes towards University Mental Health Awareness Efforts. British Educational Research Journal. https://doi.org/10.1002/berj.70042

Hogan, A. J. (2019) Social and medical models of disability and mental health: evolution and renewal. CMAJ: Canadian Medical Association journal = Journal de l’Association Medicale Canadienne, 191(1), E16–E18. https://doi.org/10.1503/cmaj.181008

Jones, C. S. and Bell, H. (2024) Under increasing pressure in the wake of COVID-19: A systematic literature review of the factors affecting UK undergraduates with consideration of engagement, belonging, alienation and resilience. Perspectives: Policy and Practice in Higher Education, 28(3), 141-151. http://dx.doi.org/10.1080/13603108.2024.2317316

Lewis, B. (2025) There’s no magic wand for student wellbeing. Wonkhe. https://wonkhe.com/blogs/theres-no-magic-wand-for-student-wellbeing/ [Accessed 16 February 2026]

McLafferty, M., O’Neill, S. and Murray, E. K. (2024) Policy Brief Student Mental Health: College Student Mental Health and Wellbeing. https://pure.ulster.ac.uk/en/publications/policy-brief-student-mental-health-college-student-mental-health-/ [Accessed 16 February 2026]

Morrish, A. (2023) HE Providers’ Policies and Practices to Support Student Mental Health. London: Department for Education. https://assets.publishing.service.gov.uk/media/646e0c027dd6e70012a9b2f0/HE_providers__policies_and_practices_to_support_student_mental_health_-_May-2023.pdf [Accessed 16 February 2026]

Newham, J., and Foster, C. (2025) Nudging university students to counselling and mental health services: staff perspectives on the implementation of a proactive well-being analytics intervention. Perspectives: Policy and Practice in Higher Education, 30(1), 58–67. https://doi.org/10.1080/13603108.2025.2565342

Newton, J., Dooris, M. and Wills, J. (2016) Healthy universities: an example of a whole-system health-promoting setting. Global Health Promotion, 23(1), 57-65. https://doi.org/10.1177/1757975915601037

Pollard, E., Vanderlayden, J., Alexander, K., Borkin, H. and O’Mahony, J. (2021) Student mental health and wellbeing. London: Department for Education. https://dera.ioe.ac.uk/id/eprint/38141/ [Accessed 16 February 2026]

Robertson, A., Mulcahy, E. and Baars, S. (2022) What works to tackle mental health inequalities in higher education? The Centre for Transforming Access and Student Outcomes in Higher Education (TASO). https://cdn.taso.org.uk/wp-content/uploads/2022-05-17_What-works-tackle-mental-health-inequalities-he_TASO.pdf [Accessed 16 February 2026]

Sanders, M. and Ellingwood, J. (2025) Student mental health in 2024: How the situation is changing for LGBTQ+ students. King’s College London, the Policy Institute and Transforming Access and Student Outcomes in Higher Education (TASO). https://cdn.taso.org.uk/wp-content/uploads/2025-02-20_Student-mental-health-in-2024-How-the-situation-is-changing-for-LGBTQ-students_TASO.pdf [Accessed 16 February 2026]

Spaeth, E. (2024) The role of compassion in inclusive learning and teaching practice. In Developing pedagogies of compassion in higher education: A practice first approach, 61–76. Cham: Springer Nature Switzerland.

Student Minds (2025) Defining our terms. University Mental Health Charter. https://hub.studentminds.org.uk/modules/umhc-framework-defining-our-terms/ [Accessed 16 February 2026]

Universities UK (UUK) (2021) Stepchange: mentally healthy universities. https://www.universitiesuk.ac.uk/sites/default/files/field/downloads/2021-07/uuk-stepchange-mhu.pdf [Accessed 16 February 2026]

Worsley, J., Pennington, A. and Corcoran, R. (2020) What interventions improve college and university students’ mental health and wellbeing? A review of review-level evidence. What Works Centre for Wellbeing. https://whatworkswellbeing.org/wp-content/uploads/2020/03/Student-mental-health-full-review.pdf [Accessed 16 February 2026]