March 2026

Summary

This report summarises the findings of two theory-based evaluations of wellbeing interventions involving small cohorts: the Coaching the Gap programme at Leeds Trinity University, evaluated using a realist approach, and the Boundary Spanner Project at St Mary’s University, Twickenham, evaluated using the Qualitative Impact Protocol (QuIP). The evaluations find evidence in support of the interventions, and the report makes recommendations regarding the importance of building relationships and the need for wellbeing interventions to be embedded in a broader network of support.

Aims

The project aimed to evaluate two wellbeing interventions that addressed gaps in the evidence identified in TASO’s Student Mental Health Toolkit. The project also aimed to provide examples, for shared learning, of two different theory-based approaches to evaluating interventions with small cohorts.

Approach

Following an open call, TASO selected and funded two higher education providers (HEPs) to participate in a project that evaluates student wellbeing interventions delivered to small cohorts. The providers participating in this project were matched with two different evaluators who used different theory-based methods to evaluate the interventions.

Key findings

Though the individual findings from each project were particular to the intervention context, the project found some key overlapping themes:

- Interventions that build relationships fill a vital gap for students who have a lower sense of belonging or are at risk of dropping out.

- The barriers to academic engagement will be present in the intervention so some students may need additional, personal support with their initial engagement.

- Implementation issues become evaluation issues, particularly when targeting interventions to specific student groups.

- Improving wellbeing improves course engagement but the interventions alone do not enable change.

- Wellbeing interventions work within a broader network of support. Standalone interventions are at risk of becoming unsustainable, particularly in the difficult financial climate.

Findings from each evaluation can be found in the report.

Recommendations

Both interventions were recommended to continue, with some implementation changes regarding targeting and sustainability and suggestions to follow up with participating students.

Recommendations for future practice

Practitioners designing interventions are invited to consider:

- how to build the conditions to establish supportive relationships.

- inviting staff to interventions where appropriate to gather buy-in and collaboration.

- the resources needed to support staff to implement the intervention and to provide consistent support for students.

- the students with more challenging life circumstances and the support they need to engage with the intervention itself.

- how interventions are targeted and how targeting shapes the intervention.

- how wellbeing interventions are integrated into other support and academic services.

Recommendations for future evaluation

Evaluators considering theory-based approaches or work with smaller cohorts are invited to consider:

- theory-based evaluations for not only measuring the effectiveness of interventions with small cohorts but also understanding the wider contextual challenges and factors that underpin how an intervention works.

- the time and resources needed to develop a detailed theory of change.

- how to implement peer researchers in a meaningful way. Engaging peer researchers requires time, training and budget.

- student engagement in evaluation as a parallel process to engaging students with the intervention itself, where flexibility is key.

Acknowledgements

Bath Social & Development Research: Rebekah Avard, Fiona Remnant

Tharani Learning and Research: Amira Tharani, Emma Roberts

Leeds Trinity University: Ruth Squire, Syra Shakir

St Mary’s University, Twickenham: Michael Hobson, Howard Bateman

TASO: Tatjana Damjanovic, Omar Khan

Project background

Concern regarding student mental health and wellbeing has grown significantly in recent years, particularly following the COVID-19 pandemic. Approximately one in five students have a diagnosed mental health condition, and mental health has recently been identified as the most commonly cited reason for students leaving higher education (Sanders and Ellingwood, 2025).

Evidence suggests that students in higher education face heightened and multifaceted pressures involving interconnected elements (Jones and Bell, 2024). This has been attributed to several factors such as academic workload, isolation and loneliness, financial difficulties, and the challenges of adapting to a new environment (Worsley et al., 2020). In addition, a concerning trend is the disproportionate impact of mental health issues on specific student groups. For example, students from ethnic minority groups, care-experienced students, as well as those from low socio-economic backgrounds are more vulnerable to mental health challenges (Robertson et al., 2022).

Despite the magnitude of these issues, the proportion of students who access help for mental health difficulties is lower than the prevalence expected from the self-report data (McLafferty et al., 2024; Newham and Foster, 2025). At the same time, higher education counselling and mental health teams are already struggling to meet rising demand despite increases in funding (Morrish, 2023; Pollard et al., 2021). Consequently, higher education institutions are in the precarious situation of needing both to empower struggling (and potentially the most at-risk) students to access support and to manage the caseload of students who already do access support.

There have been calls to embed wellbeing into the university system (Lewis, 2025; Spaeth, 2024; Newton, Dooris and Wills, 2016) and criticisms of approaches such as mental health awareness campaigns for failing to promote supportive communities (Finan and Foulkes, 2025). The sector is looking for better ways to address student wellbeing and mental ill health.

The University Mental Health Charter (Student Minds, 2025) has encouraged providers to look at student wellbeing through a whole university approach, which prioritises the nurturing of caring communities in all aspects of student life. However, change can be slow in the face of the growing need for support and there is a lack of evidence on effective interventions and approaches.

TASO’s 2023 Student Mental Health Evidence Toolkit found an absence of robust evidence for the majority of intervention types in the UK, including those embedded in the curriculum, whole-provider approaches, peer support and psychoeducation. There is also almost no evidence on the impact of mental health and wellbeing interventions on other student outcomes such as attendance, continuation and attainment.

In response to this evidence gap, in 2024 TASO commissioned three evaluation projects covering a range of methodologies: randomised controlled trials (RCTs), quasi-experimental designs and theory-based evaluations. This pluralist approach gave us the best chance of identifying different types of initiatives aimed at supporting student wellbeing, so that we could address the gaps in the evidence and identify ways in which the sector can focus their resources more effectively.

The interventions which were evaluated address student wellbeing and encompass social and physical aspects alongside academic engagement. They do not treat mental health clinically as doing so would require trained professionals who are more likely to work in a healthcare setting; higher education providers are much more likely to offer wellbeing support. TASO has adopted definitions from the University Mental Health Charter (Student Minds, 2025) which understands ‘wellbeing’ as a state which allows an ‘individual to fully exercise their cognitive, emotional, physical and social powers’. Importantly, low wellbeing can exist without clinical mental illness (UUK, 2021). These definitions allow the sector to use a social model of care (Hogan, 2019) alongside clinical models.

Two wellbeing projects

Following an open call, TASO selected and funded two higher education providers to participate in a project that evaluates student wellbeing interventions delivered to small cohorts. Our previous research (TASO, 2024) indicated that interventions for small cohorts of students are evaluated less rigorously, if at all, than those for larger cohorts, because they do not lend themselves easily to experimental methods and because of a lack of resourcing. An evidence review of student mental health interventions found gaps in the evidence for targeted interventions and for interventions that involve recreational activities such as arts or sports (TASO, 2023). This project addresses some of these gaps by evaluating a recreational intervention and a coaching intervention.

The providers participating in this project were matched with two different evaluators to explore the impact of their interventions using theory-based evaluation methods. These methods are well suited to evaluating interventions working with small cohorts as they are adaptable to changes in the delivery of the programme. To support the sector in considering theory-based evaluation in the future, two of the more commonly used methods – realist evaluation and contribution analysis – were suggested to the evaluators, who then worked with the TASO team to decide on the most appropriate evaluation design for each intervention.

The recreational intervention provided wellbeing support in art sessions and gym sessions at St Mary’s University, Twickenham. Though both types of session were open to all, additional marketing was done by the wellbeing and disability services team, and at a welcome programme supporting new widening participation students to prepare for university. The coaching intervention aimed to support students from the global majority at Leeds Trinity University, and used the coaching services of an external expert provider. See below for an overview of the interventions evaluated as part of this project.

Note: The term ‘global majority’ has been used here as determined by the evaluator team to refer to people who are Black, Asian, Brown, mixed heritage, indigenous to the global south, or have been racialised as ‘ethnic minorities’.

Table 1: An overview of the interventions evaluated and their evaluation methods

| Intervention name | The Boundary Spanner Project | Coaching the Gap |
| --- | --- | --- |
| Higher education provider | St Mary’s University, Twickenham | Leeds Trinity University |
| Intervention type | Recreational activities | Coaching |
| Implementation | Gym and arts drop-in sessions | 12 sessions per student over the academic year and proactive student contact |
| Intended beneficiaries | Widening participation students | Students from the global majority at risk of dropping out |
| Theory-based evaluation method | Qualitative Impact Protocol (QuIP) | Realist evaluation |

Theory-based evaluation

In these projects, the evaluators used two different methods that fall under the umbrella of theory-based approaches to evaluation. There are several different types of theory-based methods and guidance. A selection of these can be found on the TASO website, under our resources on evaluations for small cohorts. To evaluate the recreational intervention, the evaluators used the Qualitative Impact Protocol (QuIP); for the coaching intervention, a realist evaluation was conducted.

A theory-based approach is useful in cases where a clear comparator group is difficult to identify or involve in the evaluation. This is sometimes the case with interventions designed for small cohorts. It can also be appropriate in contexts where the intervention is complex in itself, with multiple arms to the delivery, or is part of a systems change. Theory-based methods are flexible and adaptable. They can be useful for cases in which the outcomes may not be clear from the start or where an intervention is likely to change during implementation. Theory-based evaluations require expertise and can be time-consuming, but they provide rich, detailed and nuanced insights into how interventions make change.

An evaluation using a theory-based approach begins with a comprehensive theory of change, or programme theory, which sets out the causal mechanisms by which the intervention is expected to achieve its outcomes. The research questions are then developed as ways to test the causal mechanisms identified in the theory of change. Different theory-based methods will differ in the ways in which they account for and investigate the relationships between the outcomes, contexts and assumptions in the theory of change. Developing the theory of change is an iterative process, and often includes many different actors involved in the intervention.

Theory-based approaches are method neutral and often use a combination of qualitative and quantitative methods. The data collection process measures change by testing the causal pathways, sometimes referred to as causal chains, which are a sequence of factors or events that are considered to lead to a specific outcome. As the Magenta Book points out, theory-based methods: ‘can investigate net impacts by exploring the causal chains thought to bring about change by an intervention. However, they do not provide precise estimates of effect sizes [in the way that quantitative impact evaluations can]’ (HM Treasury, 2020, p. 43).

The theory of change establishes the causal claims by outlining the steps and factors involved in linking the intervention to its expected outcomes. There may be multiple factors at play, resulting in multiple causal pathways that are then tested in the data collection phase. Different theory-based methods may test these causal pathways in different ways, but they all work to establish plausible causal claims by explaining how the multiple causal pathways work in relation to the outcomes and to one another. This is known as generative causation (Befani, 2012; Befani and Mayne, 2014; Pawson, 2007). For more information on causation in theory-based approaches, see TASO’s methodological guidance on impact evaluations with small cohorts (2022, pp. 8-20).

In this project, both the QuIP evaluation of the recreational intervention and the realist evaluation of the coaching intervention use predominantly qualitative methods for data collection in the form of student and key stakeholder interviews, with some limited quantitative data collection of administrative data. However, QuIP interviews and realist interviews are conducted differently. The two methodologies also took different approaches to testing the causal pathways and, subsequently, to analysing the data. (For more detail on the particular methods in this report, please see Case study 1: A QuIP evaluation of the Boundary Spanner Project at St Mary’s University, Twickenham and Case study 2: A realist evaluation of Coaching the Gap at Leeds Trinity University.)

The evaluations in this project also both involved peer researchers, albeit in different capacities. In both cases, the peer researcher perspective was considered a valuable addition to the research, providing insights and sensitivities to the students’ particular contexts that staff and evaluators could not have.

Key findings

Interventions that build relationships fill a vital gap for students who have a lower sense of belonging or who are at risk of dropping out.

- Both interventions showed that a network of relationships is an important factor in improving wellbeing. Feeling supported by trusted university staff increased students’ sense of belonging and purpose. Building relationships through the interventions enabled students to build other important relationships with peers and academic staff, thereby improving their help-seeking behaviours.

The barriers to academic engagement will also be present in the intervention, so some students may need additional, personal support with their initial engagement.

- The reason why a student might benefit from an intervention can be the barrier preventing initial engagement. Providing initial personal support such as signposting and practical tools improved student participation. This showed that low wellbeing can be influenced by many factors, and that the growing complexity of students’ lives and the difficult socio-economic climate significantly affect student wellbeing.

Implementation issues become evaluation issues, particularly when targeting interventions to specific student groups.

- Issues such as the variation in targeting and implementation meant that the evaluations had to adapt to unforeseen contexts. This meant smaller but more varied samples than expected. This highlights the need for a reflexive evaluation approach that adapts to changes in implementation and process.

Improving wellbeing improves course engagement but the interventions alone do not enable change.

- While improved social wellbeing was a key factor in improving course engagement, other contextual factors such as personal and financial circumstances were also determinants of a student’s academic engagement. This indicates that wellbeing interventions are most effective as part of a comprehensive, holistic approach to nurturing students’ development, including financial and academic support, as well as the availability of social events and physical activity.

Wellbeing interventions work within a broader network of support. Standalone interventions are at risk of becoming unsustainable, particularly in the difficult financial climate.

- Wellbeing interventions that foster relationships rely on the time and resources of specific dedicated staff members, which risks the sustainability of the intervention. While the evaluations conducted in this project did not measure the direct impact of the financial pressures on students or staff, they were heavily informed by the current economic pressures in higher education. Indeed, financial pressures affected both the intervention implementation and the management of the evaluation projects themselves.

Recommendations

Both interventions were recommended to continue, with some implementation changes regarding targeting and sustainability, and suggestions to follow up with participating students.

Recommendations for future practice

- Consider how to build the conditions to establish supportive relationships, whether they are between staff and students or peer support networks.

- Consider inviting staff to participate in interventions where appropriate, to gather buy-in and collaboration.

- Consider the resources needed to support staff to implement the intervention and to provide consistent support for students. This would require senior level buy-in, job stability and the implementation of resources in terms of time, budgets and departmental connections to enable interventions to be embedded.

- Consider the benefits of the wellbeing activities themselves, while keeping in mind that different activities will suit different students. There is evidence to suggest that recreational activities and coaching enrich students with additional skills and can relieve stress, but no single activity suits all students.

- Consider the support around the intervention itself. Some students with more challenging life circumstances, whether it is financial instability, commuting, working or a disability, may need help to initially engage with the intervention and may need ongoing additional support.

- Consider how interventions are targeted. Targeting should inform the intervention at all stages, from recruitment strategies to maintaining engagement and tailoring the implementation process. Consider also how targeting shapes the intervention if multiple groups or broad categories of students are targeted simultaneously.

- Consider how wellbeing interventions are integrated into other support and academic services. This may improve engagement as well as the sustainability of the intervention.

Recommendations for future evaluation

- Consider theory-based evaluations for not only measuring the effectiveness of interventions with small cohorts but also understanding the wider contextual challenges and factors that underpin how an intervention works.

- Consider the time and resources needed to develop a detailed theory of change. Longer run-in periods allow for more detail and clarity relating to the intervention, the data collection and the underlying assumptions. Future evaluations using theory-based approaches may need longer than a single academic year.

- Consider how to implement peer research in a meaningful way. Engaging peer researchers requires time, training and budget.

- Consider student engagement in evaluation as a parallel process to engaging students with the intervention itself. Flexibility is key: there is no one-size-fits-all approach, and accommodating student circumstances, such as juggling work and study or having a disability, requires multiple ways of contacting students, whether via peers, WhatsApp groups, emails or lecturers.

Next steps

- The higher education providers, Leeds Trinity University and St Mary’s University, Twickenham, will be reviewing the findings of their respective reports. Both providers anticipate continuing the interventions, with some changes to improve the implementation process.

- TASO will be formulating strategies and support for smaller providers who may be working with smaller cohorts.

- TASO will also be considering how participatory methods may bolster evaluations, in order to build on the work of the peer researchers in these projects.

- For future research, a major question is how to involve the students who do not engage with an intervention. This population may offer valuable insights into the mechanisms of why an intervention does not work for some students.

- These evaluations have shown that more research is needed into the complexity of students’ lives in the current socio-economic and cultural context. Future research should investigate the mechanisms behind system-change approaches to wellbeing, where financial, social, physical and emotional wellbeing are addressed holistically. This research may also help to redefine who a student is, given the growing population of students with work and caring responsibilities.

Case study 1: A QuIP evaluation of the Boundary Spanner Project at St Mary’s University, Twickenham

This design has been pre-registered on the Open Science Framework (OSF).

For more details, please see the technical report.

Evaluators: Rebekah Avard and Fiona Remnant (Bath Social & Development Research (SDR))

Project contributors:

- St Mary’s University, Twickenham: Michael Hobson, Howard Bateman and Nikki Anghileri

- Peer researchers: Bidisha Saikia and Nahiyan Rashid

Intervention

Two different types of recreational activities were offered as part of this intervention. ‘Reprezent Health’ sessions were twice-weekly gym sessions, facilitated by academic and wellbeing staff as well as student coaches. ‘Hang out and paint’ sessions were weekly art sessions, with a range of art materials to hand and classical music playing. These were facilitated by a member of the wellbeing team.

Both activities were drop-in sessions delivered in person, on campus, at times in the week when students were anticipated to have in-person teaching. Students could join one or both activities. Importantly, university staff were also invited to join if they had the time. The activities shared a student-focused ethos in which conversations during the sessions were dictated by the students. They often, however, focused on topics impacting students’ wellbeing such as academic overwhelm, time management, adapting to university life, and making friends.

The intervention was initially aimed at commuter students and those from a Black, Asian and minority ethnic background. However, on implementation, the intervention was open to all and there was no recruitment strategy to target those students specifically, though additional marketing was done at some widening participation welcome events and through wellbeing services.

Evaluation

Qualitative Impact Protocol (QuIP) assesses the impact of an intervention via structured interviews and systematic analysis of causal statements. A QuIP interview guide uses both open-ended and closed questions to assess perceived change across pre-agreed outcome domains which reflect the theory of change.
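As a rough illustration of what a systematic analysis of causal statements can involve, the sketch below tallies coded causal claims by outcome domain and attributed driver. It is a minimal sketch only and does not represent the evaluators' actual procedure or software: the `CausalClaim` structure, the `tally_drivers` helper, the domain labels and the example claims are all hypothetical and invented for illustration.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CausalClaim:
    """One coded causal statement from an interview (hypothetical structure)."""
    respondent: str  # anonymised respondent ID
    driver: str      # what the respondent says caused the change
    outcome: str     # pre-agreed outcome domain the change falls under
    direction: str   # "positive" or "negative" perceived change


def tally_drivers(claims):
    """Count distinct respondents attributing each outcome to each driver."""
    # De-duplicate so a respondent repeating the same claim is counted once.
    seen = {(c.respondent, c.driver, c.outcome) for c in claims}
    return Counter((outcome, driver) for _, driver, outcome in seen)


# Invented example data, for illustration only (not evaluation data).
claims = [
    CausalClaim("R01", "intervention", "sense of belonging", "positive"),
    CausalClaim("R01", "intervention", "sense of belonging", "positive"),
    CausalClaim("R02", "intervention", "sense of belonging", "positive"),
    CausalClaim("R02", "academic staff", "course engagement", "positive"),
]

counts = tally_drivers(claims)
for (outcome, driver), n in sorted(counts.items()):
    print(f"{outcome} <- {driver}: {n} respondent(s)")
```

Counting respondents rather than mentions keeps one talkative interviewee from dominating the tally. That is a design choice in this sketch, not a claim about how the QuIP analysis was carried out here.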

In this evaluation, the evaluation team conducted 20 in-depth interviews with participating students. The interviews explored the students’ experiences and perspectives on university life and their engagement with their academic studies and extra-curricular activities. This helped provide a broader context within which to understand how the intervention has affected the students. These interviews were designed to capture stories of change, which allow for detailed insights into the personal impact of the Boundary Spanner interventions on various aspects of students’ lives, such as course engagement and social life. Participants were not initially asked directly about the intervention, but were asked about it at the end of the interview if they had not mentioned it. Additional key informant interviews with student coaches and delivery staff provided further process-orientated insights into how the intervention ran and how staff involvement may have affected the student outcomes.

The evaluation aimed to test four anticipated causal pathways that explain how the Boundary Spanner Project was expected to lead to its hypothesised impacts and outcomes. Due to implementation issues, only three of the pathways could be fully tested. These are described below. Please see the technical report for a detailed description of all the causal pathways.

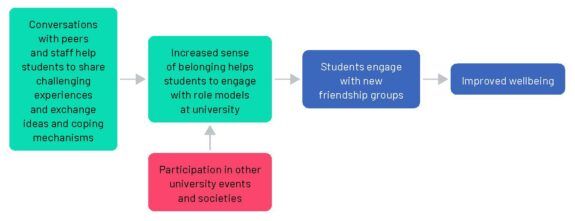

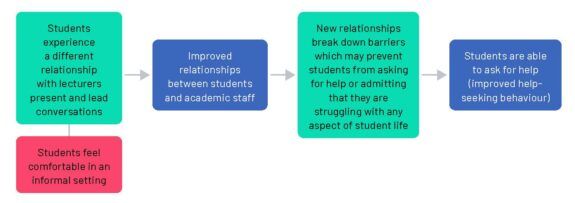

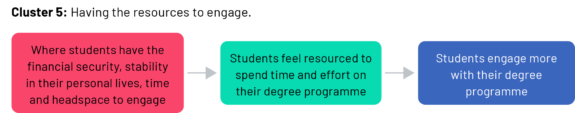

The finalised and tested causal pathways are below. Mechanisms are depicted in green, context in pink and outcomes in blue.

Causal pathway 1: Confidence building and new networks

Causal pathway 2: Breaking down barriers between staff and students improves relationships and help-seeking behaviours

Causal pathway 3: Better student engagement

Main findings

- The intervention provided students with a social space to make connections and develop friendships with peers, leading to an increased sense of belonging at university.

- The role of facilitators was crucial in providing informal academic and wellbeing support to students.

- The gym and art activities themselves held intrinsic value and supported student wellbeing.

- There was little evidence to suggest that the intervention increased students’ awareness of formal support services.

- Other factors such as university events, academic staff and wellbeing services were also reported to be influencing the key intended outcomes. For social and wellbeing outcomes, the intervention was the most frequently reported driver of change, but academic outcomes were more likely to be influenced by other factors.

Conclusions

This evaluation found evidence to validate three of the four causal pathways within the theory of change. The intervention had a significant positive impact on social and wellbeing outcomes for many students, but could be strengthened by improving staff engagement and administrative support.

The evaluation found that the facilitators and the social space helped students to develop a sense of belonging, which improved wellbeing. However, the reliance on particular staff in the face of resourcing and implementation challenges put the sustainability of an intervention run in this way into question.

Issues of wider staff engagement and student recruitment to the intervention also affected the evaluation, meaning that there was a smaller sample of more engaged students. These implementation issues also meant that the St Mary’s team was not able to provide complete Boundary Spanner attendance data. This limited the possibility of substantiating the causal pathways as it was difficult to test and comment in any detail on the engagement levels of students.

Case study 2: A realist evaluation of Coaching the Gap at Leeds Trinity University

This design has been pre-registered on the Open Science Framework (OSF).

For more details, please see the technical report.

Evaluators: Amira Tharani and Emma Roberts

Project contributors:

- Leeds Trinity University: Syra Shakir and Ruth Squire

- Leeds Trinity University student researchers: Okwuchi Osondu and Lewis Miles-Berry

Intervention

One-to-one online coaching sessions were offered by an external, specialist provider, with global majority coaches holding nationally accredited foundational coaching qualifications. The intervention targeted global majority students at Level 5 or Level 6 who were considered at risk of disengagement. Students were put forward for the intervention by the student support and wellbeing team, as well as personal tutors. This resulted in an initial longlist of 70 eligible students who were invited to sign up to the intervention. Funding restricted the intervention to 30 students.

The associate professor involved in designing the intervention also ran introductory workshops to inform the students about coaching. They were supported by the student liaison and engagement officer to make proactive contact with all students, helping them to book their first session. Students were matched to a coach and then booked their sessions online. The associate professor checked in on students during the coaching programme, especially if they missed or rescheduled sessions.

Students were entitled to six sessions, at a pace of their own choosing. On implementation, it emerged that some students asked for and received more than six sessions, at the coaches’ discretion. Students were encouraged to set their own personal goals for coaching in the first session. The intervention was delivered once between October 2024 and April 2025, with each online coaching session lasting one hour.

Note: The term ‘global majority’ has been used here as determined by the evaluator team to refer to people who are Black, Asian, Brown, mixed heritage, indigenous to the global south, or have been racialised as ‘ethnic minorities’.

Note: Level 5 refers to the second year of an undergraduate course or qualifications equivalent to a Foundation Degree, Higher National Diploma or Diploma of Higher Education. Level 6 refers to the final year of a Bachelor’s degree.

Evaluation

In realist evaluation, causal pathways are conceptualised as ‘context – mechanism – outcome’ (CMO) clusters, or ‘intervention – context – mechanism – outcome’ (ICMO) clusters. The primary focus of the evaluation is testing whether the CMO clusters are credible and supported by the evidence generated by the evaluation (Pawson and Tilley, 1997).
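One way to picture a CMO or ICMO cluster is as a small record that holds the hypothesised pathway together with the evidence gathered for and against it. The sketch below is an assumption-laden illustration, not the evaluators' tooling: the `CMOCluster` class, its fields and the example content are hypothetical and do not reproduce any of the clusters in this report.

```python
from dataclasses import dataclass, field


@dataclass
class CMOCluster:
    """A hypothesised context-mechanism-outcome pathway (hypothetical structure)."""
    name: str
    context: str
    mechanism: str
    outcome: str
    intervention: str = ""  # optional 'I' component for ICMO clusters
    supporting: list = field(default_factory=list)     # evidence sources in favour
    contradicting: list = field(default_factory=list)  # evidence sources against

    def add_evidence(self, source: str, supports: bool) -> None:
        """Record whether an evidence source supports or contradicts the cluster."""
        (self.supporting if supports else self.contradicting).append(source)

    def summary(self) -> str:
        return (f"{self.name}: {len(self.supporting)} supporting, "
                f"{len(self.contradicting)} contradicting")


# Invented placeholder content, for illustration only.
cluster = CMOCluster(
    name="Example cluster",
    context="placeholder context",
    mechanism="placeholder mechanism",
    outcome="placeholder outcome",
)
cluster.add_evidence("interview A", supports=True)
cluster.add_evidence("interview B", supports=True)
cluster.add_evidence("interview C", supports=False)
print(cluster.summary())
```

Keeping contradicting evidence alongside supporting evidence reflects the idea of testing whether a cluster is credible, rather than only collecting confirmations. In practice that judgement is qualitative and would not reduce to a simple count.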

This evaluation was mixed-methods, with some quantitative engagement and administrative data collection. Realist interviews were conducted by the evaluators with students who received the coaching sessions, as well as coaches and key personnel from the university team. Realist interview questions test each cluster by describing the hypothesised causal pathway, or part of it, and asking open questions to elucidate the participant’s experience of it (Greenhalgh et al., 2017; Manzano, 2016). Peer researchers were also involved, but only in the analysis and refinement phases of the evaluation, as they were not available earlier on in the process.

There are some caveats to the evaluation as it was not possible to triangulate some of the data due to conflicting timelines and data availability. This included triangulating the interview data with the university engagement and attainment data, and the coaching provider’s survey of students’ self-rating against their coaching goals. There were also some variations in the delivery, with some students having more than six sessions. Nonetheless, the evaluators interviewed two thirds of participants involved in the intervention, providing a complex picture that was then reflected back to the implementation team and the peer researchers.

The evaluation began with five clusters developed in initial theory of change workshops involving university staff, the coaching provider and the TASO team. These clusters were then refined and tested during the evaluation.

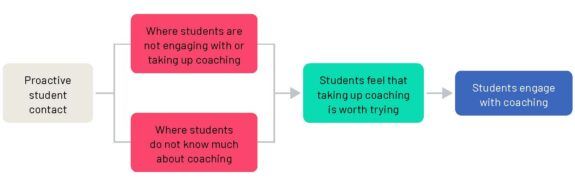

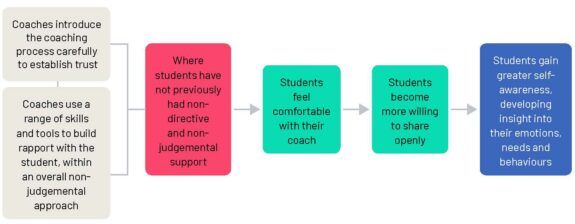

Below are the finalised clusters. Please note that the intervention factor (grey) was conceptualised as working in conjunction with the context (pink), and that together they would contribute to the mechanisms (green) and outcomes (blue).

Key

Cluster 1: Supporting student engagement in coaching

Cluster 2: Psychological safety in the coaching room

Cluster 3: Developing self-awareness in the coaching room

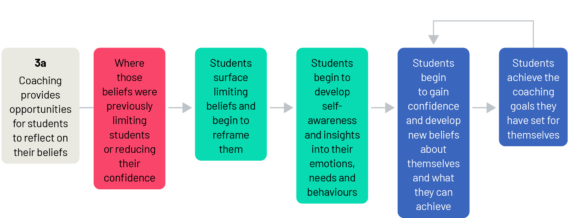

Cluster 3a: Reflecting on limiting beliefs

Cluster 3b: Setting and tracking goals

Cluster 3c: Building helpful skills and habits

Cluster 4: Psychological safety outside the coaching room

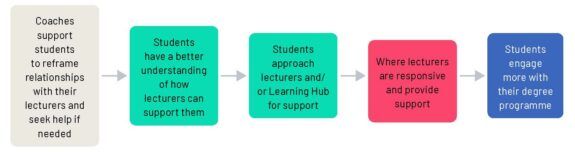

Cluster 5: Having the resources to engage

Main findings

- Proactive outreach helped students engage with coaching, particularly those living in difficult circumstances.

- The strength of the relationship between coach and student was a key enabler of self-awareness.

- Coaching supported students to achieve their goals through several separate but interconnected pathways:

  - Breaking down goals into manageable steps

  - Having someone to whom they were accountable

  - Developing supportive skills and habits

  - Surfacing and reframing limiting beliefs.

- Coaching supported students to engage more in their degree programme by submitting work earlier, attending more lectures, and proactively seeking academic help from lecturers and tutors.

- Students’ personal circumstances impacted engagement in their degree programme. These included students’ and their families’ health, bereavement, caring responsibilities and financial difficulties. Coaching helped students in some circumstances to cope, but where difficulties were more severe, students tended to step back from engagement in coaching.

Conclusions

This evaluation confirmed most of the causal pathways outlined in the theory of change, with some amendments. Relationships emerged as central in this evaluation. The relationships between coaches and students were crucial to developing trust and accountability that enabled students to make the changes they needed and embed the new organisational habits and study skills they learned. The student-coach relationship was sometimes enabled by the relationship between the students and the associate professor, with the associate professor offering students proactive support, and it also enabled the building of further relationships between students and other academic and support staff.

For students with difficult life circumstances, other support systems such as financial support may be needed before they engage with coaching. Coaching emerged as an effective way to help individual students better manage their relationships, time and goals. However, for those needing help to navigate difficult life circumstances, coaching is no substitute for whole-university approaches that tackle systemic inequality and racism.

References

Befani, B. (2012) Models of causality and causal inference. Department for International Development (DfID). https://mande.co.uk/wp-content/uploads/2022/06/Modelsof-Causality-and-Causal-Inference.pdf [Accessed 16 February 2026]

Befani, B. and Mayne, J. (2014) Process tracing and contribution analysis: a combined approach to generative causal inference for impact evaluation. IDS Bulletin 45(6): 17–36. https://doi.org/10.1111/1759-5436.12110

Finan, S. and Foulkes, L. (2025) Disingenuous “box‐ticking”: Undergraduate students’ attitudes towards University Mental Health Awareness Efforts. British Educational Research Journal. https://doi.org/10.1002/berj.70042

Greenhalgh, T., Pawson, R., Wong, G., Westhorp, G., Greenhalgh, J., Manzano, A. and Jagosh, J. (2017) The Realist Interview. The RAMESES II Project, National Institute for Health Research. https://www.ramesesproject.org/media/RAMESES_II_Realist_interviewing.pdf [Accessed 16 February 2026]

HM Treasury (2020) The Magenta Book: guidance notes for policy evaluation and analysis. London: HM Treasury. https://assets.publishing.service.gov.uk/media/5e96cab9d3bf7f412b2264b1/HMT_Magenta_Book.pdf [Accessed 16 February 2026]

Hogan, A. J. (2019) Social and medical models of disability and mental health: evolution and renewal. CMAJ: Canadian Medical Association Journal, 191(1), E16–E18. https://doi.org/10.1503/cmaj.181008

Jones, C. S. and Bell, H. (2024) Under increasing pressure in the wake of COVID-19: A systematic literature review of the factors affecting UK undergraduates with consideration of engagement, belonging, alienation and resilience. Perspectives: Policy and Practice in Higher Education, 28(3), 141-151. http://dx.doi.org/10.1080/13603108.2024.2317316

Lewis, B. (2025) There’s no magic wand for student wellbeing. Wonkhe. https://wonkhe.com/blogs/theres-no-magic-wand-for-student-wellbeing/ [Accessed 16 February 2026]

McLafferty, M., O’Neill, S. and Murray, E. K. (2024) Policy Brief Student Mental Health: College Student Mental Health and Wellbeing. https://pure.ulster.ac.uk/en/publications/policy-briefstudent-mental-health-college-student-mental-health-/ [Accessed 16 February 2026]

Manzano, A. (2016) The craft of interviewing in realist evaluation. Evaluation, 22, 342–360. https://doi.org/10.1177/1356389016638615

Morrish, A. (2023) HE Providers’ Policies and Practices to Support Student Mental Health. London: Department for Education. https://assets.publishing.service.gov.uk/media/646e0c027dd6e70012a9b2f0/HE_providers__policies_and_practices_to_support_student_mental_health_-_May-2023.pdf [Accessed 16 February 2026]

Newham, J. and Foster, C. (2025) Nudging university students to counselling and mental health services: staff perspectives on the implementation of a proactive well-being analytics intervention. Perspectives: Policy and Practice in Higher Education, 30(1), 58–67. https://doi.org/10.1080/13603108.2025.2565342

Newton, J., Dooris, M. and Wills, J. (2016) Healthy universities: an example of a whole-system health-promoting setting. Global Health Promotion, 23(1), 57–65. https://doi.org/10.1177/1757975915601037

Pawson, R. (2007) Causality for Beginners. NCRM Research Methods Festival 2008: Leeds University. http://eprints.ncrm.ac.uk/245/ [Accessed 16 February 2026]

Pawson, R. and Tilley, N. (1997) Realistic Evaluation. London: Sage.

Pollard, E., Vanderlayden, J., Alexander, K., Borkin, H. and O’Mahony, J. (2021) Student mental health and wellbeing. London: Department for Education. https://dera.ioe.ac.uk/id/eprint/38141/ [Accessed 16 February 2026]

Robertson, A., Mulcahy, E. and Baars, S. (2022) What works to tackle mental health inequalities in higher education? The Centre for Transforming Access and Student Outcomes in Higher Education (TASO). https://cdn.taso.org.uk/wp-content/uploads/2022-05-17_What-workstackle-mental-health-inequalities-he_TASO.pdf [Accessed 16 February 2026]

Sanders, M. and Ellingwood, J. (2025) Student mental health in 2024: How the situation is changing for LGBTQ+ students. King’s College London, the Policy Institute and Transforming Access and Student Outcomes in Higher Education (TASO). https://cdn.taso.org.uk/wp-content/uploads/2025-02-20_Student-mental-healthin-2024-How-the-situation-is-changing-for-LGBTQ-students_TASO.pdf [Accessed 16 February 2026]